When identical or substantially similar content, i.e., duplicate content, exists across multiple web pages, search engines may struggle to determine the most relevant page to display in search results. This often poses a significant challenge for website owners who spend hours creating original content. For various reasons, this duplicate content ends up diluting the ranking for the affected pages and affecting overall search engine optimization. So, how do you fix duplicate content?

Understanding the varied sources of duplicate content is crucial for effective resolution. Whether from URL variations, printer-friendly pages, or content syndication, each instance demands a tailored solution. Navigating these challenges is essential to maintaining a website’s search engine ranking and ensuring a positive user experience.

In this blog post, we’ll delve into the primary causes of duplicate content and answer the question, “How do you fix duplicate content?” We’ll cover practical steps and best practices for resolving these issues, offering actionable insights for website owners and content creators. Finally, we’ll introduce Multicollab, a tool that helps site owners level up their overall content strategy while avoiding duplicate content issues.

Addressing the Risks of Duplicate Content

Duplicate content is a prevalent issue that can significantly impact a site’s SEO performance and overall content visibility. Search engines, tasked with delivering the most relevant and diverse search results, encounter challenges when confronted with duplicate content. This ambiguity hampers their ability to determine which version of the content should be indexed and displayed in search results.

First and foremost, duplication compromises rankings for every version of the content, because the versions compete against each other for search engine attention. Moreover, link metrics such as trust, authority, and link equity are diluted across the various versions instead of accruing to a single page.

Most crucially, duplicate content leads to reduced visibility. Search engines may choose to index or display only one version they perceive as the primary or most relevant, leaving other versions in obscurity. This affects your content’s reach and hinders the potential organic traffic and audience engagement that each version could generate.

In the subsequent sections, we will explore strategies and tools to effectively tackle these risks, ensuring a robust SEO strategy and optimal content performance.

Common Causes of Duplicate Content

Duplicate content issues can arise from various technical setup issues and human-driven factors. Understanding these causes is crucial for implementing practical solutions and maintaining a robust online presence.

1. Inconsistent URL Structure:

Search engines may index multiple variations of the same content if URLs exhibit differences such as:

- Non-www vs www:
  - Non-www: http://example.com/page
  - www: http://www.example.com/page

- HTTP vs HTTPS:
  - HTTP: http://www.example.com/page
  - HTTPS: https://www.example.com/page

- Variations in URL case sensitivity:
  - Lowercase: https://www.example.com/page
  - Uppercase: https://www.example.com/PAGE

- Presence or absence of a trailing slash:
  - With trailing slash: https://www.example.com/page/
  - Without trailing slash: https://www.example.com/page

- Index URL: https://www.example.com/page

- Filter URL:
  - Filtered by date: https://www.example.com/page?filter=date
  - Filtered by relevance: https://www.example.com/page?filter=relevance

- Taxonomy (Category) URL:
  - Category A: https://www.example.com/categoryA/page
  - Category B: https://www.example.com/categoryB/page

- Tracking URL:
  - Original URL: https://www.example.com/page
  - Tracking parameter added: https://www.example.com/page?utm_source=tracking
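To make the URL variations above concrete, here is a minimal Python sketch that maps several variants of one page onto a single preferred form. The policy it encodes (HTTPS, the www host, lowercase paths, no trailing slash, no `utm_` parameters) and the name `normalize_url` are assumptions chosen for illustration, not a rule search engines apply. Your own preferred form may differ.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical policy for this sketch: HTTPS, "www" host, lowercase paths,
# no trailing slash, and no utm_* tracking parameters.
TRACKING_PREFIXES = ("utm_",)


def normalize_url(url: str) -> str:
    """Map common URL variants of one page onto a single preferred form."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if not host.startswith("www."):
        host = "www." + host
    # Lowercasing paths is only safe if the server treats them case-insensitively.
    path = parts.path.lower().rstrip("/") or "/"
    # Keep functional parameters (such as filters); drop tracking ones.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
    ]
    return urlunsplit(("https", host, path, urlencode(query), ""))


variants = [
    "http://example.com/page",
    "http://www.example.com/page",
    "https://www.example.com/PAGE",
    "https://www.example.com/page/",
    "https://www.example.com/page?utm_source=tracking",
]
print({normalize_url(u) for u in variants})
# prints {'https://www.example.com/page'}
```

Filter URLs such as `?filter=date` are deliberately left distinct here, because they may show different content. Whether they should resolve to the same page is a decision for the site owner.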

2. Indexing of Additional URLs:

Various additional URLs, including index, filter, taxonomy (e.g., category, tag), and tracking URLs, may lead to the same page being indexed under multiple URLs. This can confuse search engines, impacting the accurate assessment of the page’s relevance. Here are some examples:

- Paginated Content: Paginated content, such as blog posts with multiple pages of comments, may be indexed separately, potentially diluting the content’s visibility and search engine ranking.
  - First Page of the Blog Post:
    - https://www.exampleblog.com/post-title
  - Paginated Pages of Comments:
    - Page 2: https://www.exampleblog.com/post-title/page/2
    - Page 3: https://www.exampleblog.com/post-title/page/3

- Regional Variants: Content targeted at different regions but in the same language may be indexed separately, creating duplicate content concerns. For instance, content tailored for the Canadian and U.S. markets might inadvertently exist as distinct entities.
  - Content for the U.S. Market:
    - https://www.examplestore.com/us/products
  - Content for the Canadian Market:
    - https://www.examplestore.com/ca/products

- Indexing of Search Results Pages: Search results pages being indexed can lead to duplicate content issues. These dynamic pages may generate different results based on user queries, but if indexed, they can contribute to content duplication.
  - Original Search Page:
    - https://www.examplesearch.com/search
  - Search Results for Query “Keyword A”:
    - https://www.examplesearch.com/search?q=KeywordA
  - Search Results for Query “Keyword B”:
    - https://www.examplesearch.com/search?q=KeywordB

- Staging/Testing Environments: Staging or testing environments being indexed is a common oversight. Content in these environments, intended for development purposes, may unintentionally become accessible to search engines, leading to duplicate content concerns.

- Print-Friendly Versions: Print-friendly versions of web pages being indexed can result in redundant content, as these versions typically mirror the primary content but lack the formatting necessary for online display.

- Scraped or Copied Content: Even if your content is the original version, a copy published by another site with higher authority may be perceived as the original by search engines. This underscores the importance of monitoring and addressing content scraping.

- Manufacturer-Provided Product Descriptions: When it comes to e-commerce sites, the use of manufacturer-provided product descriptions can lead to duplicate content issues, primarily if competing sites sell the same product using identical descriptions.

- Very Similar Content on Your Site: Many sites carry very similar content, e.g., e-commerce stores with similar products. On such sites, product descriptions that differ only in trivial details can still raise duplicate content concerns. It’s crucial to differentiate the content so that search engines can tell the pages apart.

- Overlapping Content in Similar Posts or Pages: Posts or pages targeting similar intent may contain overlapping content, inadvertently creating duplication. Ensuring that content is distinct and tailored to specific user queries is essential for effective SEO.

“Tailoring content to meet the unique needs of individual searchers is not just a best practice but a critical strategy. By doing so, you not only avoid duplication issues caused by inconsistent URL structures but also guarantee that your content provides maximum value to the audience. Each piece of content is a bespoke solution, meticulously crafted to address the diverse queries of users, ultimately contributing to a more meaningful and effective online presence.”

Nimesh Patel, Product Growth Manager @ Multicollab

Identifying Duplicate Content on Your Website

Identifying and addressing duplicate content on your website is crucial for maintaining a solid online presence and optimizing content performance. So, before working out how to fix duplicate content, it is essential to find where the duplication occurs and what causes it.

Here are strategic approaches for site owners to quickly identify duplicate content issues:

- Google Search Console’s “Coverage” Tab: Google Search Console is a powerful tool that provides valuable insights into your site’s performance on Google search. Use the “Coverage” tab (shown as the “Pages” report under “Indexing” in newer versions of Search Console) to identify potential issues related to duplicate content. This section highlights errors and problems Google encounters when crawling and indexing your pages. Pay special attention to statuses that mention duplicates, such as “Duplicate without user-selected canonical”, and take steps to rectify them.

- “site:example.com” Search in Google: A simple yet effective method involves running a “site:example.com” search in Google. Replace “example.com” with your domain. This search command displays all indexed pages associated with your site. Compare the results with your expected number of pages. Discrepancies may indicate duplicate content or indexing issues. Examine the snippets provided to identify similarities between pages.

- Dedicated Tools for Duplicate Content Detection: Take advantage of specialized tools designed to identify duplicate content efficiently. Tools like Siteliner and Copyscape are tailored for this purpose: they analyze websites to highlight duplicate content and broken links, detect plagiarism, and more.

Regularly employing these strategies will empower site owners to identify and address duplicate content issues proactively. By integrating these practices into routine website maintenance, you can ensure the continued health and optimization of your site’s content, contributing to improved SEO performance and a more seamless user experience.
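For a rough sense of how duplicate detection can work, the Python sketch below compares page texts by their overlapping three-word sequences ("shingles") and reports pairs whose Jaccard similarity meets a threshold. This is a generic technique, not how Siteliner, Copyscape, or Google work internally. The function names, the 0.8 threshold, and the sample pages are all assumptions for illustration.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams ("shingles") in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles two pages have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.8):
    """Yield (url_a, url_b, score) for page pairs at or above the threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    for (url_a, set_a), (url_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            yield url_a, url_b, score


# Hypothetical page texts keyed by URL path.
pages = {
    "/shirt": "Red cotton shirt with short sleeves and a classic fit",
    "/shirt-print": "Red cotton shirt with short sleeves and a classic fit",
    "/garden": "Completely different article about garden tools and soil care",
}
for url_a, url_b, score in near_duplicates(pages):
    print(url_a, url_b, score)
```

Comparing every pair of pages is fine for a small site. A large site would need a faster approach, such as hashing the shingles first.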

How Do You Fix Duplicate Content?

Effectively managing duplicate content starts with identifying the source of the duplication before implementing a solution. This matters because the right strategy depends on whether the duplication occurs across multiple domains or within the same site.

- Canonicalization: This involves specifying the preferred version of a page for search engines. Using the canonical tag guides search engines to index and rank the chosen version, consolidating the ranking signals. This is particularly useful for addressing issues stemming from URL variations or similar content.

- 301 Redirects: Implementing 301 redirects is a powerful method for permanently redirecting one URL to another. This is beneficial when consolidating multiple pages into a single authoritative version. This resolves duplicate content concerns and ensures a seamless user experience by directing visitors to the correct page.

- Hreflang Tags: For websites with content targeting different regions but in the same language, hreflang tags are invaluable. These tags indicate the language and regional targeting of a page, helping search engines deliver the most relevant content to users based on their location.

- Robots.txt, Meta Robots & Noindex: The robots.txt file controls which URLs search engines may crawl, while a meta robots tag with the noindex directive keeps a page out of the index. A page must remain crawlable for its noindex directive to be seen, so avoid blocking the same URL in robots.txt. These controls are particularly useful for content that serves a specific purpose but is not intended for search engine visibility.

- Preferred Domain and Parameter Handling in Google Search Console: Google Search Console previously offered a preferred domain setting and a URL parameter handling tool to assist in content management. Google has since retired both, so rely on 301 redirects and canonical tags to signal your preferred domain and to consolidate parameterized URLs.

- Manual Content Update and Amendment: The most fundamental solution is to manually update and amend duplicate content to provide unique value. While crafting original, high-quality content that adds value to users is crucial, it is even more important to regularly review and update this content to ensure its relevance and uniqueness.
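Several of the fixes above come down to small pieces of markup in a page’s `<head>`. The Python sketch below builds those elements as strings: a canonical link, hreflang alternates, and a noindex meta tag. The helper names are hypothetical, and the `en-us` and `en-ca` codes are an assumed mapping for the U.S. and Canadian example URLs used earlier. 301 redirects are configured on the web server or in the CMS, so they are not shown here.

```python
from html import escape


def canonical_link(preferred_url: str) -> str:
    """Build the <link> element that names the preferred version of a page."""
    return f'<link rel="canonical" href="{escape(preferred_url, quote=True)}">'


def hreflang_links(variants: dict[str, str]) -> list[str]:
    """Build one alternate <link> per language-region code, e.g. "en-us"."""
    return [
        f'<link rel="alternate" hreflang="{escape(code, quote=True)}" '
        f'href="{escape(url, quote=True)}">'
        for code, url in sorted(variants.items())
    ]


def noindex_meta() -> str:
    """Build the meta robots element that asks crawlers not to index a page."""
    return '<meta name="robots" content="noindex">'


print(canonical_link("https://www.example.com/page"))

# Assumed language-region codes for the regional example URLs.
regional = {
    "en-us": "https://www.examplestore.com/us/products",
    "en-ca": "https://www.examplestore.com/ca/products",
}
for tag in hreflang_links(regional):
    print(tag)

print(noindex_meta())
```

Each regional page would normally carry the full set of hreflang links, including one that points to itself, so that the variants reference each other consistently.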

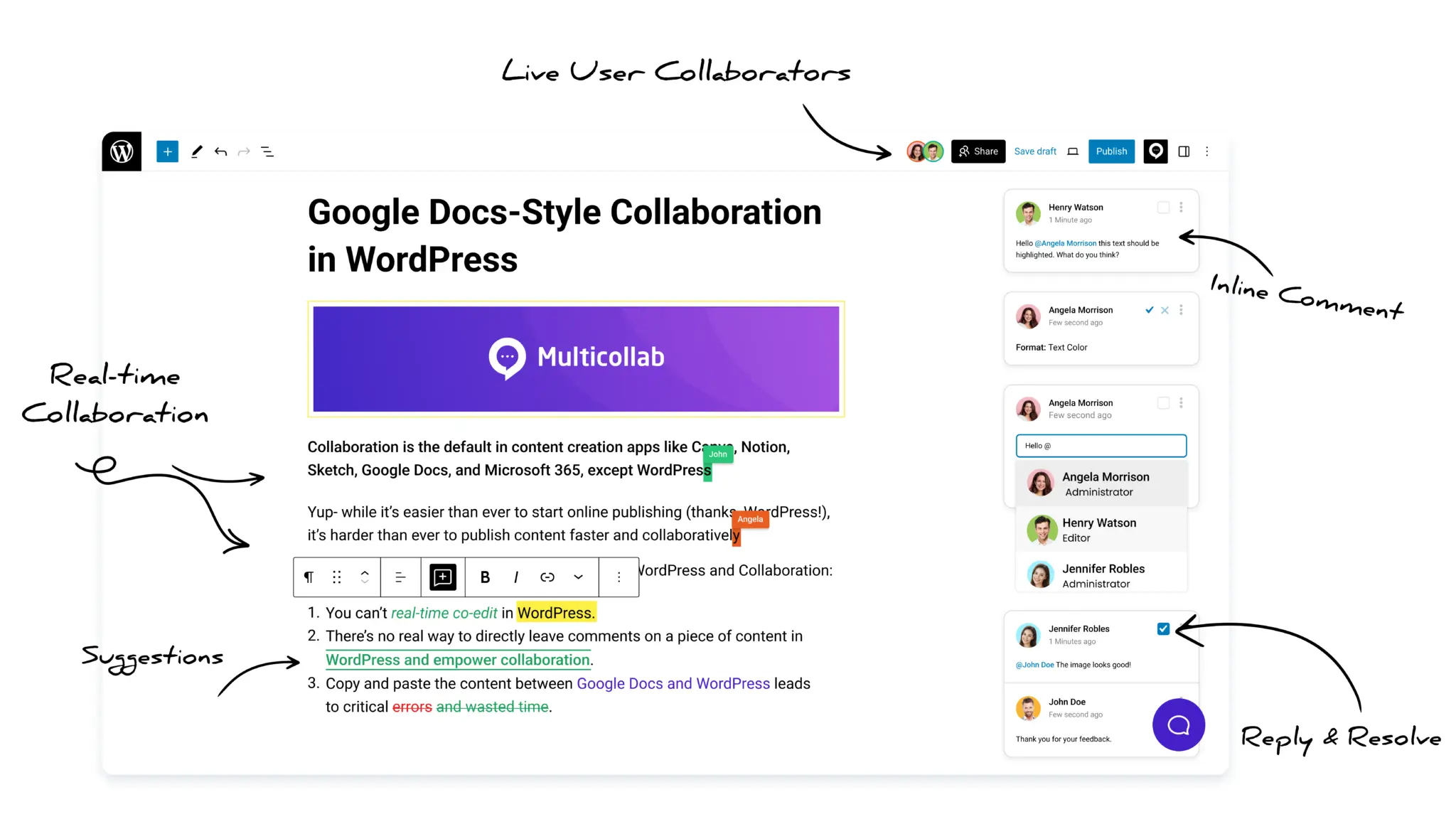

When a content team updates content manually, Multicollab offers a comprehensive way to combat duplicate content issues. The tool improves content management in WordPress and helps the team retain the originality and quality of its content. By bringing all collaborators together, the following Multicollab features help site owners maximize the quality of their website content:

- Easy editorial collaboration directly within WordPress ensures a streamlined editing and publishing process.

- Site owners and team members can stay updated with the latest edits or suggested changes to posts and pages through email and Slack notifications.

- Multicollab’s Activity Reports help site owners keep track of content edits at a glance, enhancing transparency and accountability within the content creation and editing workflow.

Maximizing Unique Content Creation with Multicollab

Original content is essential for capturing audience attention and gaining search engine favor. It distinguishes your brand, engages audiences with unique perspectives, and establishes authority in your niche. While you might be working hard to ensure that all of your website content is original, ensuring it does not fall prey to content duplication is an added responsibility that cannot be overlooked. Duplicate content poses a significant challenge for website owners, impacting their SEO efforts and online visibility.

The early identification and resolution of duplicate content issues is paramount to optimize website performance. While awareness of common causes of duplicate content is a prerequisite, having a tool like Multicollab can be a game changer. Facilitating seamless collaboration directly within WordPress, the tool streamlines the editing and publishing process with email and Slack notifications and quick snapshot reporting to keep all collaborators updated on the latest edits and changes.

To keep duplicate content in check and maximize the success of your content strategy, get started with Multicollab today and witness the transformation of your content optimization.